You may be familiar with the theory expounded in The Fourth Turning by William Strauss and Neil Howe. If you are not, you are excused: it is, after all, the academic whitepaper version of the saying that strong men make good times, good times make weak men, weak men make hard times, and hard times make strong men. Testing whether history really is a four-stroke engine will be the topic of a future post; what matters here is that Strauss and Howe posit a secular cycle lasting about eighty years, the relevant time scale being set by the typical human lifespan. Throughout the cycle, different generational constellations (people who were children at a given stage now being middle-aged leaders, and so on) shape the collective psychology of the time, affecting the turn of events and setting history on a quasi-periodic trajectory.

As it turns out, eighty years is roughly the same duration as the periods into which science historians Lorraine Daston and Peter Galison subdivide the history of objectivity in their eponymous book. Their three periods cover roughly from 1780 to 2020, as follows: truth-to-nature from the late 18th to mid 19th century, mechanical objectivity from the mid 19th century to the early 20th century, and trained judgment from the early 20th century to the present.

Each period represents a distinctive way in which scientists interacted with scientific data in the course of their work. Understandably, Daston and Galison focus on image data and in fact restrict their scope even further, essentially to images that appear in scientific atlases: stuff like galaxy catalogs or botanic compilations. Historians need to work with actual documents that sit in archives. However, their conclusions have a much broader reach: each period supposedly has a distinctive scientific ethos, corresponding to rules and conventions delimiting what counts as legitimate data processing as opposed to unwarranted manipulation. In a sense, these rules define the boundaries of the scientific method of the time, acting as a demarcation line between science and quackery. The fact that they change over time suggests that portraying the scientific method as set in stone is misguided.

Truth-to-nature, the first period, is exemplified by the work of Goethe as a naturalist: in Daston and Galison’s account, his aim was to represent idealised, archetypical versions of the plants he observed and drew, abstracting away from individual variation. Other authors did not go as far as he did in pursuing his vision of metaphysical Urpflanzen underlying the multitude of individual specimens, but still considered it an acceptable practice to clean up and even beautify their data in ultimately subjective ways. One of the common sins of the time (obviously only a sin as judged anachronistically from our vantage point) was to assume symmetry where individual specimens were asymmetrical, as in the case of snowflakes.

Quoting from the book:

How did ideas like the Urpflanze become visible on the page? What did truth-to-nature look like? Early atlas makers did not all interpret the notion of “truth-to-nature” the same way. The words typical, ideal, characteristic, and average are not synonymous, even though they all fulfilled the same standardizing purpose. These alternative ways of being true to nature suffice to show that concern for accuracy does not necessarily imply concern for objectivity. On the contrary: extracting nature’s essences almost always required scientific atlas makers to mold their images in ways that their successors would reject as dangerously “subjective”. Because all these methods of discovering the idea in the observation clashed with objectivity, later atlas makers tended to lump them together as regrettable meddling with the data.

[…]

In eighteenth-century atlases “typical” phenomena were those that hearkened back to some underlying Typus or “archetype”, and from which individual phenomena could be derived, at least conceptually. The typical is rarely, if ever, embodied in a single individual; nonetheless the astute observer can intuit it from cumulative experience

If you think that this mindset is a thing of the past, forever relegated to the dustbin of history by the relentless march of scientific progress, think again. The struggle to find the Urpflanze is in fact alive and well in today’s astronomy. This is a quote from the author’s rebuttal for a paper I was refereeing a while ago (details redacted to make sure the author cannot be identified):

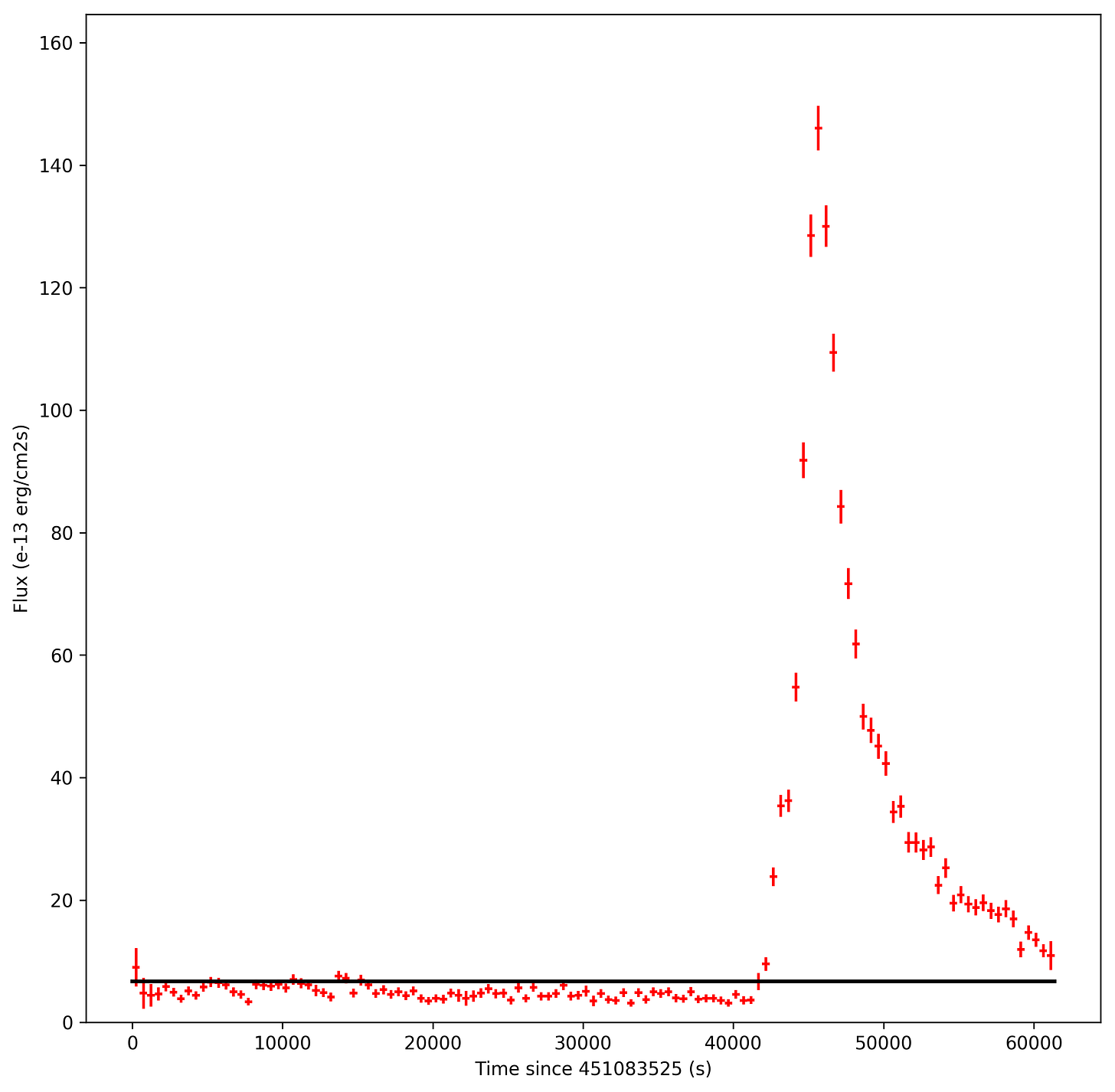

The choice of the binning size is somehow arbitrary because we verified that adopting intervals regular in radius or its log, or including a fixed number of stars, often brings to quite noisy profiles, with a consequent loss of information and the possible appearance of spurious features. This is because, in spite of hundreds stars measured over the entire radial extension, the statistics is relatively poor in each bin, especially if a reasonable radial sampling is aimed for. Our experience with "small number statistics" clearly demonstrates that an “adaptive” binning is the best choice to produce meaningful results with no loss of information due to stochastic noise.

Here the author argues that arbitrarily manipulating the bins of a histogram is allowable as long as it gets us meaningful results, that is, a density profile for a star cluster that is not noisy (and presumably the true one). Before you scream scientific fraud, hold on a second to appreciate that they were very open about this. This is not some postdoc cutting corners because of publish-or-perish: what we are looking at is a genuine clash of values.
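To get a feeling for how much the binning choice matters, here is a minimal sketch. It is not the author's pipeline, which I cannot reproduce: the Plummer profile, the 300 stars, the six bins and all function names are assumptions made up for illustration. It draws projected radii from a known profile and estimates the surface density with equal-width and with equal-count annuli, so the two answers can be compared with the truth.

```python
import math
import random


def sample_radii(n, a=1.0, r_max=10.0, seed=0):
    """Draw n projected radii from a Plummer surface-density profile truncated at r_max."""
    rng = random.Random(seed)
    # The fraction of stars projected inside R is R^2 / (a^2 + R^2); invert it.
    u_max = r_max**2 / (a**2 + r_max**2)
    radii = []
    for _ in range(n):
        u = rng.random() * u_max
        radii.append(a * math.sqrt(u / (1.0 - u)))
    return sorted(radii)


def true_density(r, n, a=1.0, r_max=10.0):
    """Expected stars per unit area at radius r for the truncated model."""
    u_max = r_max**2 / (a**2 + r_max**2)
    return n / (math.pi * a**2 * u_max) * (1.0 + (r / a) ** 2) ** -2


def equal_width_edges(r_max, n_bins):
    """Annuli that are regular in radius."""
    return [r_max * i / n_bins for i in range(n_bins + 1)]


def equal_count_edges(radii, n_bins):
    """Annuli chosen so that each holds about the same number of stars (radii must be sorted)."""
    n = len(radii)
    inner = [radii[(n * i) // n_bins] for i in range(1, n_bins)]
    # Nudge the outer edge so the outermost star falls inside the last bin.
    return [0.0] + inner + [radii[-1] * (1.0 + 1e-9)]


def density_profile(radii, edges):
    """Return (bin midpoint, star count, stars per unit area) for each annulus."""
    rows = []
    for lo, hi in zip(edges[:-1], edges[1:]):
        count = sum(1 for r in radii if lo <= r < hi)
        area = math.pi * (hi**2 - lo**2)
        rows.append((0.5 * (lo + hi), count, count / area))
    return rows


if __name__ == "__main__":
    n_stars, r_max = 300, 10.0
    radii = sample_radii(n_stars, r_max=r_max)
    for label, edges in [
        ("equal width", equal_width_edges(r_max, 6)),
        ("equal count", equal_count_edges(radii, 6)),
    ]:
        print(label)
        for mid, count, dens in density_profile(radii, edges):
            truth = true_density(mid, n_stars)
            print(f"  R={mid:6.2f}  N={count:3d}  est={dens:9.3f}  true={truth:9.3f}")
```

Both schemes are fixed before looking at the result. Changing the seed or the number of bins shows how much the profile moves with choices that have nothing to do with the cluster, which is exactly the room an “adaptive” binning tuned by eye has to play with.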

In machine learning jargon, the days of truth-to-nature were a time in which scientists chose the bias side of the bias-variance tradeoff. By taking on a strong prior, they made their conclusions robust to the idiosyncratic variations of their data. As happens with strong priors, this meant that at times their conclusions were later recognized as wrong, as in the case of Haeckel’s embryo drawings.

Given that the information provided by an informative prior does not, by definition, come from the data, it must come from somewhere else. If not from the object, then from the subject. But subjects vary too, hence truth-to-nature scientists, when wrong, were wrong in different ways: variance, kicked out of the door, came back through the window.
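The tradeoff can be made concrete with a toy simulation. This is only a sketch under assumptions I am making up for the purpose: a single quantity whose true value is 1, Gaussian measurement noise, and an estimator that blends the sample mean with a prior guess through a hypothetical `weight` parameter. A heavy weight on a wrong prior gives stable but biased conclusions, and observers holding different priors disagree even when handed the same data.

```python
import random
import statistics


def shrunk_estimate(data, prior, weight):
    """Blend the sample mean with a prior guess; weight=1 ignores the data entirely."""
    return weight * prior + (1.0 - weight) * statistics.fmean(data)


def bias_and_variance(prior, weight, truth=1.0, sigma=1.0, n=10, trials=2000, seed=0):
    """Repeat the experiment many times and summarise the estimator's errors."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(trials):
        data = [rng.gauss(truth, sigma) for _ in range(n)]
        estimates.append(shrunk_estimate(data, prior, weight))
    bias = statistics.fmean(estimates) - truth
    return bias, statistics.pvariance(estimates)


if __name__ == "__main__":
    # weight=0.0: no prior, unbiased but noisy.
    # weight=0.8: strong (and wrong) prior, stable but off target.
    for weight in (0.0, 0.8):
        bias, var = bias_and_variance(prior=0.0, weight=weight)
        print(f"weight={weight:.1f}  bias={bias:+.3f}  variance={var:.4f}")

    # Same data, three observers with different priors: the spread comes back.
    rng = random.Random(1)
    data = [rng.gauss(1.0, 1.0) for _ in range(10)]
    conclusions = [shrunk_estimate(data, prior, 0.8) for prior in (-0.5, 0.0, 2.0)]
    print("conclusions:", [round(c, 3) for c in conclusions])
```

In this setup the bias is `weight * (prior - truth)` and the variance scales as `(1 - weight)**2`, so the strong prior buys stability at the price of being systematically off whenever the prior is wrong.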

Suddenly, the Bachelardian idea that a psychoanalysis of objective knowledge is possible (necessary, even) does not seem that far-fetched anymore. It’s surprising that Daston and Galison, who nonetheless introduce the notion of a scientific self, do not mention Gaston Bachelard at all in their book [1]. In his The Formation of the Scientific Mind, Bachelard covers precisely the truth-to-nature period, showing how Enlightenment scientists such as Émilie du Châtelet were wrestling with inadequate priors (such as substantialism). These priors he dubs epistemological obstacles; see also my last post. They are roadblocks on the way to objective knowledge that do not reside in the empirical data themselves but rather in the limiting assumptions the scientist is relying on to make sense of the data, assumptions that are often implicit. Tellingly, the opening quote of Bachelard’s book The Psychoanalysis of Fire reads:

Il ne faut pas voir la réalité telle que je suis (roughly: “One must not see reality as I am”)

The solution found by scientists in the next period –mechanical objectivity- was thus to minimise the amount of human discretion in data collection and processing, favouring automatic techniques such as photography. Their goal was to let Nature speak in her own words instead of guessing at her intended meaning. Individual variation and even photographic artefacts became proof that the data was genuine rather than being treated as a nuisance as in the truth-to-nature period. Scientists embraced variance as a way of warding off bad priors.

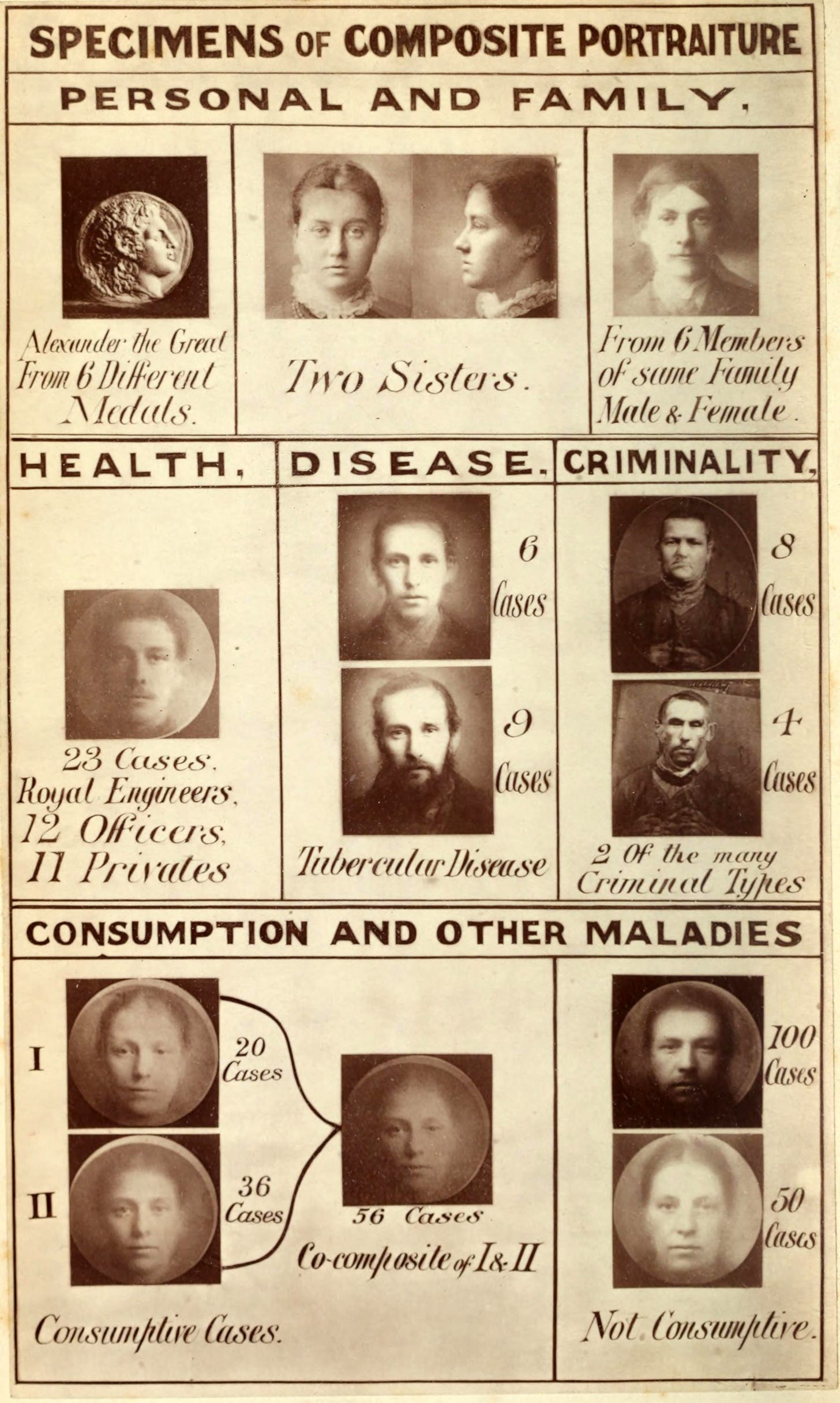

This is still largely what we consider the correct way to deal with scientific data today: share the raw data and be open about any transformation or manipulation introduced at any stage of the data processing. Interestingly, this period also gave us techniques that reduce variance without relying on an informative prior, such as Galton faces, which are built by averaging a large number of face images and are a precursor of today’s eigenfaces.
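Why averaging tames variance without any prior can be seen in a toy version of a composite portrait. This is a deliberately crude sketch: the 8x8 checkerboard standing in for the features all faces share, the independent Gaussian noise standing in for individual variation, and the function names are all assumptions of mine. Galton's actual composites were photographic superpositions, and real faces do not differ by independent pixel noise.

```python
import math
import random


def noisy_copy(template, sigma, rng):
    """One 'portrait': the shared pattern plus independent individual variation."""
    return [[pixel + rng.gauss(0.0, sigma) for pixel in row] for row in template]


def composite(images):
    """Pixel-by-pixel mean of equally sized images."""
    n = len(images)
    rows, cols = len(images[0]), len(images[0][0])
    return [[sum(img[i][j] for img in images) / n for j in range(cols)] for i in range(rows)]


def rms_difference(a, b):
    """Root-mean-square pixel difference between two images of the same shape."""
    sq = [(x - y) ** 2 for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b)]
    return math.sqrt(sum(sq) / len(sq))


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical shared pattern: an 8x8 checkerboard.
    template = [[float((i + j) % 2) for j in range(8)] for i in range(8)]
    portraits = [noisy_copy(template, 1.0, rng) for _ in range(100)]
    print("single portrait:", round(rms_difference(portraits[0], template), 3))
    print("composite of 100:", round(rms_difference(composite(portraits), template), 3))
```

With independent noise the scatter of the composite shrinks roughly as one over the square root of the number of portraits, and nobody had to decide in advance what the typical face should look like.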

The ideal of mechanical objectivity did not last longer than the previous one, though. In many situations, making do without a prior of some sort is simply impossible. If you have ever had to deal with actual empirical data you likely know what I am talking about: Nature is messy and, without knowing what to look for, the message is inevitably lost in the noise. The solution to this shortcoming of mechanical objectivity is what Daston and Galison dub trained judgment: making sure that scientists share the same prior by giving them a common framework for interpreting data. Training becomes a way to standardise subjective judgment, to make subjective judgment reliable. This is what astronomers do when they teach a graduate student the art of data reduction. Trained judgment is history’s pendulum swinging back to the high-bias side but, as often happens, with a twist: high bias is now fine as long as we all have the same bias.

The era of trained judgment started about eighty years ago, some forty years into the twentieth century. This coincides with Michael Polanyi’s pivot from physical chemistry to philosophy. Polanyi is the philosopher of trained judgment, if there is one. Surprisingly, again, he gets mentioned only in passing in Daston and Galison’s book, exactly once. Still, his work hits the nail on the head: go read The Tacit Dimension if you want to understand why trained judgment superseded mechanical objectivity. A blog post I wrote a while ago addresses this from a perhaps unnecessarily confrontational angle that does not matter here.

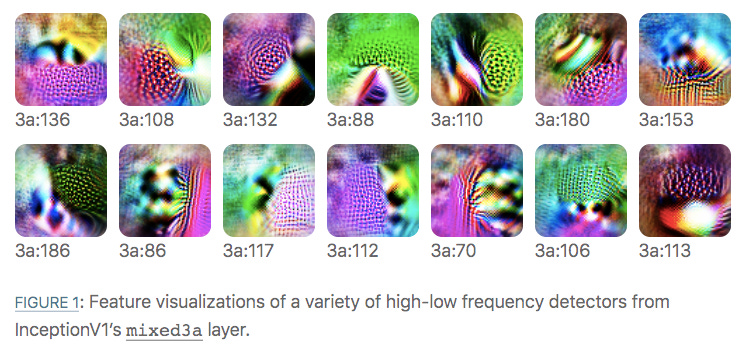

In short, scientific activity as we know it would be impossible in the absence of tacit knowledge: knowledge a trained scientist has, but cannot easily formalise and transmit. Scientists know more than they can tell. The training of students is a way to transfer this knowledge via teaching by example rather than through explicit instruction. Think about classifying galaxies in terms of morphological categories: I know how to do it, but if I wanted to teach you, I would need to point at galaxy images, tell you their class, and hope that you figure it out on your own. That’s what Galaxy Zoo has been doing for years, delivering this particular bit of scientific judgment training to the masses at an unprecedented scale.

If Strauss and Howe’s theory is right, the era of trained judgment is about to end too. What will come next? While it is difficult to make predictions and especially so about the future, my bet is that machine learning will be a key component of the coming era. One of the key features of the trained judgment era was that tacit knowledge could be stored only within a person’s skull, making people important. Theologians, and Catholic theologians in particular, love this feature of Polanyi’s philosophy: if key knowledge can be passed from one generation to the next only through a personal connection that involves trust, then even science cannot do without authority and tradition. The need to trust another person as a starting point for using one’s own reason is basically the credo ut intelligam of Anselm, itself echoing Augustine’s crede ut intelligas.

Today, it is no longer true that tacit knowledge is personal. We can train a neural network by showing it examples, exactly like we would a human. The knowledge thus stored is still tacit: the structure of the neural network is incomprehensible to us, unless we go to great lengths to dissect it like we would with a chimp’s brain. This is a real field of study called mechanistic interpretability, by the way. Although tacit, the knowledge stored in a trained neural network is essentially knowledge without a knower: actions with real consequences can be carried out automatically based on it, and it is far from clear who bears the responsibility for those actions.

The future may be scary but it won’t be boring.

[1] Unless they do and I missed it somehow; it’s certainly not in the index.